Are AI Tools Enhancing Human Potential or Replacing Effort?

They Say AI Is the Future, But Is It?

AI is being experimented with in every industry. From writing tools to coding partners, from creative design generators to legal research assistants, the names vary, but the response is nearly always the same. People ask, “Why wouldn’t we use AI if it can do this?” The question comes out fast, almost automatically, as though the answer were obvious.

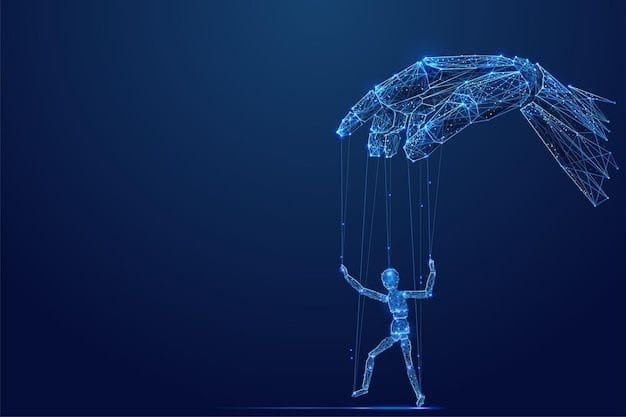

But think about what that actually means. Saying AI is beneficial is predicated on our understanding of its long-term effects. It implies that complicated and time-consuming tasks are better left to machines. That may appear simple, but it gets more complicated the more you examine it. AI transforms how people learn, work, and assess effort; it is more than just a tool. Its influence extends to the people using it, the institutions implementing it, and the assumptions we make about skill, competence, and value.

The Drive for Efficiency

At the heart of the AI debate is a fundamental human instinct: efficiency. Students, professionals, and creatives all face limits—deadlines, performance expectations, and measurable outcomes. AI promises to accelerate output and reduce friction. A task that once took hours can now be done in minutes.

That promise is powerful. It is easy to see why adoption has skyrocketed. Who would reject a tool that delivers faster results, more polished outputs, or immediate analysis? Yet efficiency comes with trade-offs. Cognitive psychology shows that skill develops through effort, repetition, and problem-solving. When AI performs the work for us, those processes weaken.

Early adopters often experience a confidence boost. The initial productivity gains feel like mastery. Over time, however, gaps in understanding emerge. Students may struggle to write independently. Professionals may produce polished work but fail when asked to analyze without AI. The tools are fast, but human judgment can lag behind.

Dependence or Amplification?

One of the most common assumptions about AI is that it simply replaces effort all at once. It does not. Dependence grows quietly. A writer who relies on AI for every draft may struggle to organize ideas without prompts. A student using AI to solve math problems may lose conceptual understanding. Convenience can become substitution.

Data on performance without AI shows a consistent pattern. Individuals who use AI as a crutch perform worse when it is unavailable. Skills like critical thinking, creative problem-solving, and quantitative reasoning erode when effort is bypassed. If the goal is human growth, reliance on AI carries a real cost.

At the same time, there is a path for AI to amplify human potential. When tools are used to explore possibilities, analyze information, or test ideas, humans gain speed without losing control. The difference is subtle but critical: AI as a partner versus AI as a replacement.

The Risk of Skill Loss

Even if AI boosts output, the consequences of reliance can be permanent. Time spent outsourcing problem-solving is time not spent building human capability. Knowledge and skills not developed cannot be restored automatically.

Industries already see this effect. Junior designers increasingly rely on AI for concept sketches, limiting their ability to develop a personal style. Coding students who use AI for debugging may never come to understand the underlying logic. Legal interns using AI for research can produce documents quickly, but their comprehension may remain shallow. Output looks polished, but the ability to reason independently weakens.

In education, this becomes especially stark. Students completing essays with AI may score well, but they struggle when asked to craft arguments without the tool. The skill atrophy is subtle at first, but over time, it compounds. Machines cannot repair what has been lost.

The Human Dimension

AI tools are not humans. They do not learn context the way people do. They cannot replicate judgment formed through experience. The same tool used by two people produces different outcomes because human oversight, understanding, and intent shape results.

It is easy to rely on AI when work can be reduced to prompts. It becomes harder when independent judgment is required. Dependence subtly devalues human skills—critical thinking, problem-solving, creativity, and analysis. The danger is that society may begin to undervalue these traits as AI becomes more capable.

This is not about condemning AI; it is about understanding its impact. Tools amplify human potential when used correctly, but when misused, they diminish what makes humans capable.

The Ethics of Outsourcing Effort

AI also raises ethical questions. If students or employees present AI-generated work as their own, does it constitute dishonesty? How much oversight is enough to prevent misuse? In some industries, the line is clear. In others, it is murky.

Educators face tough choices. Should they ban AI outright and risk underprepared students, or integrate it as a learning tool? Corporations wrestle with similar dilemmas: do they allow AI to handle client-facing work, risking skill erosion, or limit its use and sacrifice efficiency?

The stakes are not abstract. Overreliance on AI can compromise learning, weaken professional judgment, and erode personal capability. Misuse creates a workforce that appears competent on the surface but lacks depth underneath.

The Pressure to Perform

AI also reflects a deeper social dynamic: the pressure to perform. In workplaces and classrooms alike, employees and students face constant comparison. Deadlines and output expectations shape behavior. AI offers an escape from these pressures: a way to produce more, faster, without investing proportional effort.

This can be seductive. The initial gains reinforce reliance. Over time, individuals may prioritize efficiency over mastery, expedience over understanding. Society rewards output, not process, creating a feedback loop that encourages overdependence.

Even experts face this tension. Doctors using AI diagnostics may rely on suggestions without questioning them. Engineers may trust AI calculations without full verification. The tools can improve accuracy, but they also risk dulling judgment and oversight.

The Long-Term Implications

The impact of AI on human capability is slow and cumulative. Tools that provide immediate benefit can create long-term deficits in skill, knowledge, and independence. Industries may gain efficiency while the workforce loses resilience. Education systems may produce high-performing students who cannot think critically. Creative fields may yield visually stunning work that lacks originality.

The question is not whether AI will change the way we work—it already has. The question is whether humans will adapt responsibly or allow essential skills to erode. AI amplifies potential when humans remain active participants in the process. It diminishes potential when humans defer judgment and effort to machines.

Where That Leaves Us

So, does AI enhance human potential, or does it replace effort? The answer is not simple. AI is powerful. It accelerates, analyzes, and automates. It allows humans to achieve more in less time. But its benefits come with trade-offs. Overreliance risks eroding human skills that cannot be recovered automatically. Convenience can become substitution, and output can outpace understanding.

AI is not inherently good or bad. Its value depends entirely on human choices. When used as a partner, it extends what we can do. When used as a replacement, it diminishes what we can be. The tools do not fail—they reflect the priorities, oversight, and effort humans bring.

The hardest truth may be that AI is less about efficiency and more about judgment. It exposes how humans manage effort, learning, and growth. Mastery, skill, and understanding do not come from automation—they come from engagement, decision-making, and persistence. AI can help us—but whether we remain capable of doing the work ourselves, even when we do not have to, is entirely up to us.